This is among the most important things I’ve ever worked on. That said, “wouldn’t be where I am now without this” sounds so goofy. Look at where I am, it blows, lmao. As always with hackathon stuff, this is a truncated version of what I originally published for $$$. — 02.16.2024

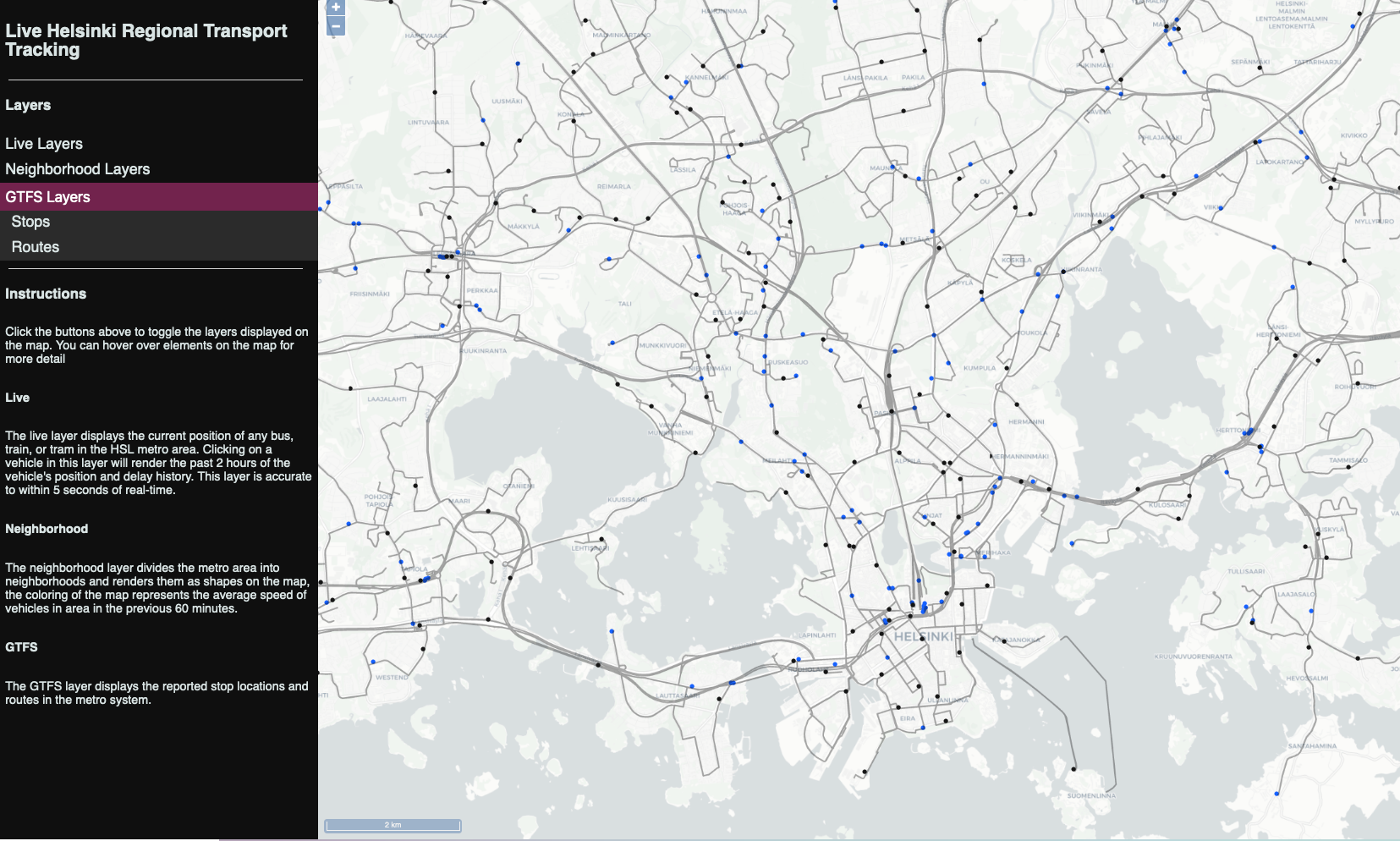

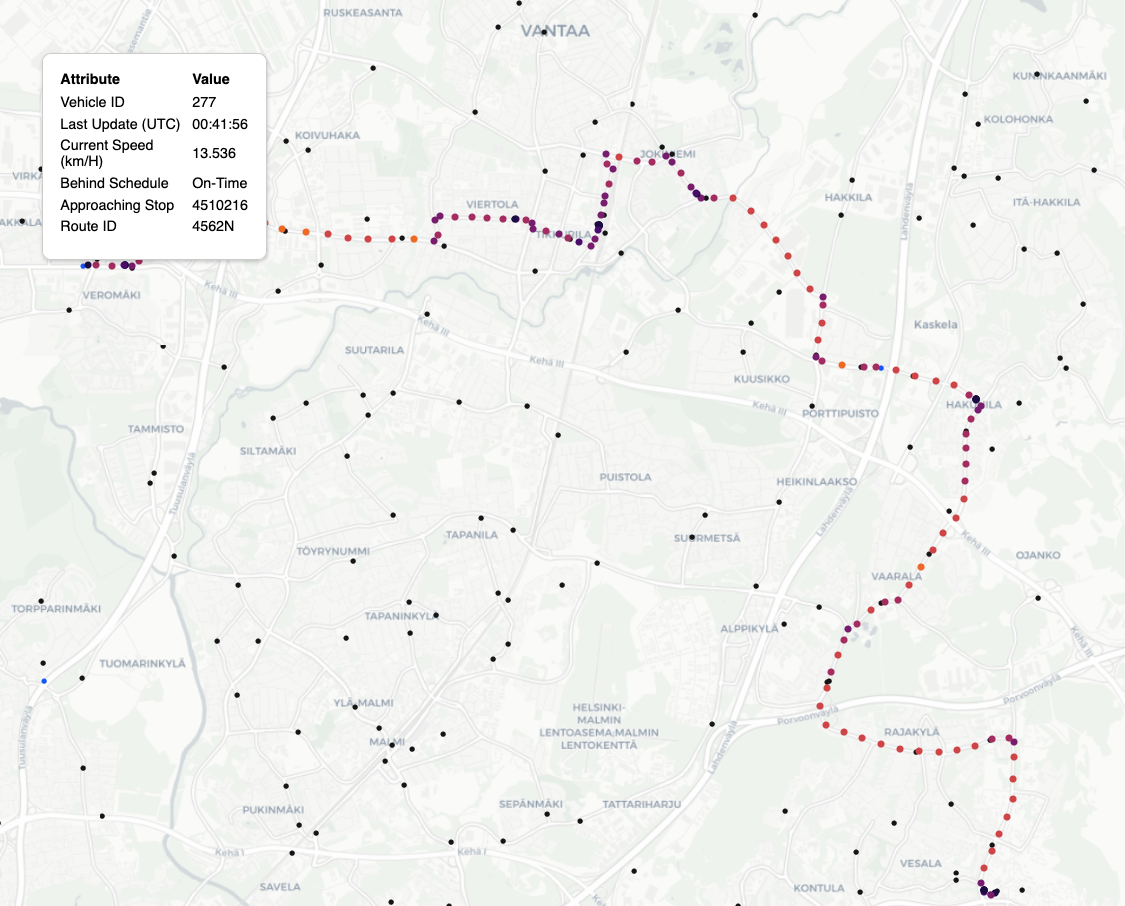

This project publishes realtime locations of municipal transport vehicles in the Helsinki metro area to a web UI. Although Helsinki offers a great realtime API for developers, there is no site that makes this data generally available to the public. Given that HSL, the Helsinki Regional Transit Authority, publishes on the order of 50 million updates per day, I felt that Redis TimeSeries could be a great tool to quickly aggregate tens of thousands of points. Data is sourced from HSL via a public MQTT feed. Incoming MQTT messages are processed through a custom MQTT broker that pushes them to Redis. More about the real-time positioning data from the HSL Metro can be found here.
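To make the MQTT-to-Redis step concrete, here is a minimal sketch of how one vehicle-position message could be turned into a Redis TimeSeries `TS.ADD` command. It is not the broker's actual code. The payload field names (`VP`, `oper`, `veh`, `tsi`, `spd`, `desi`) follow my reading of HSL's public positioning format, while the key layout, the `route` label, and the one-hour retention are assumptions made up for illustration.

```python
import json
from typing import Optional


def hfp_to_ts_add(payload: bytes, retention_ms: int = 3_600_000) -> Optional[list]:
    """Build TS.ADD arguments from one vehicle-position message.

    Returns None when the message carries no speed reading.
    """
    vp = json.loads(payload)["VP"]
    if vp.get("spd") is None:
        return None

    # Hypothetical key layout: one speed series per operator/vehicle pair.
    key = f"speed:{vp['oper']}:{vp['veh']}"
    # The feed's tsi field is Unix seconds; TS.ADD expects milliseconds.
    timestamp_ms = int(vp["tsi"]) * 1000

    return [
        "TS.ADD", key, str(timestamp_ms), str(float(vp["spd"])),
        "RETENTION", str(retention_ms),       # assumed retention window
        "LABELS", "route", str(vp["desi"]),   # assumed label name
    ]


sample = json.dumps(
    {"VP": {"desi": "550", "oper": 22, "veh": 792,
            "tsi": 1620968788, "spd": 12.5, "lat": 60.2, "long": 24.9}}
).encode()
print(hfp_to_ts_add(sample))
```

With a live Redis connection, the returned list could be passed to a client's generic command call, for example `execute_command(*args)` in redis-py.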

— UI with GTFS (black) and vehicle (blue) layers enabled.

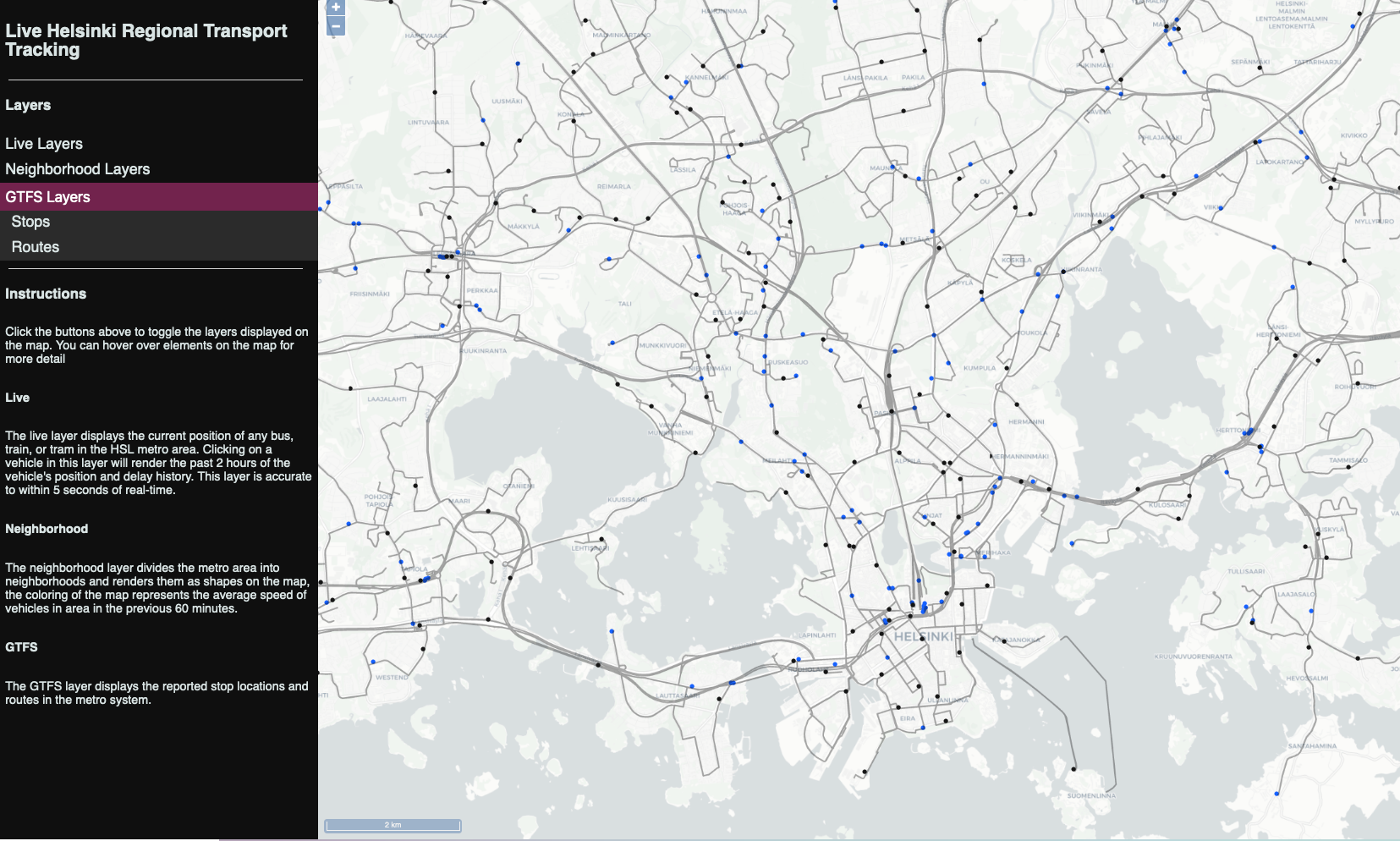

— UI with the trip history layer and tooltip showing details of vehicle’s current status. Coloring maps to vehicle’s speed.

All components of the app are hosted on a single AWS t3.medium with a gp3 EBS volume. The t3.medium was appealing because we can burst CPU performance for rush hour. I’m obviously playing with fire here: no concessions have been made for uptime, availability, etc. If it goes down, then it’s just down for a little bit. Thanks to some tweaks, this configuration is unlikely to fall over as is, but it took some trial and error.

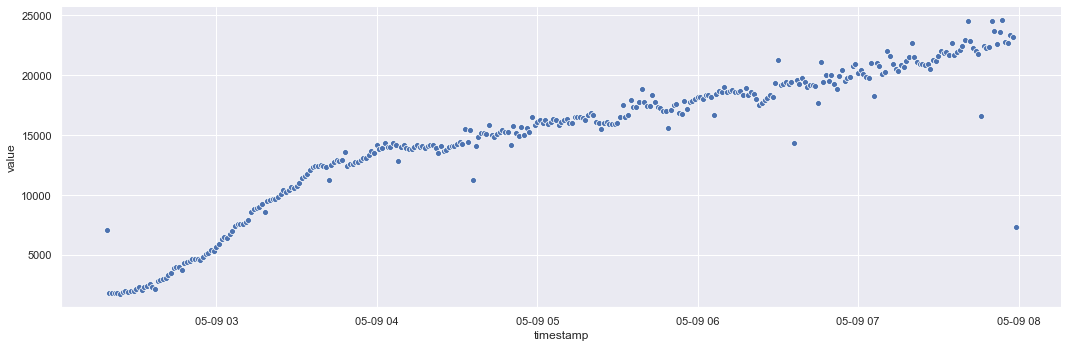

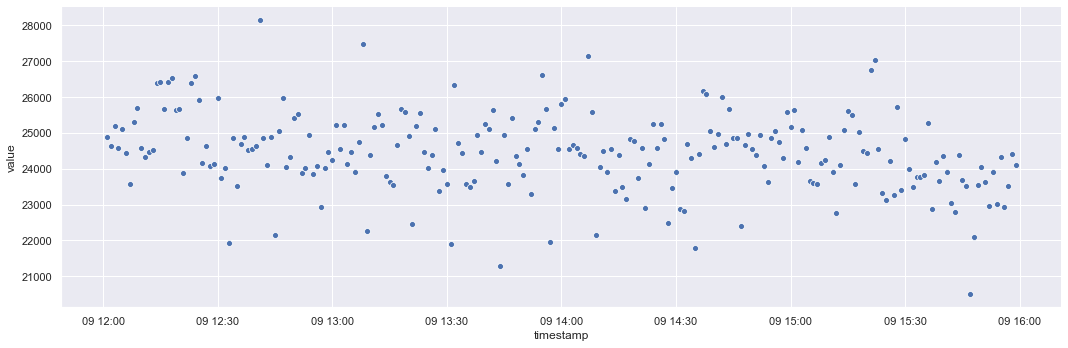

— This system was not designed for performance. However, it performs surprisingly well even during peak travel hours. The following charts display the rise in event throughput on a Sunday morning into afternoon and evening. Notice that towards the middle of the day the system tops out at ~25,000 events/min after growing steadily from ~1,000 events/min early in the morning. On a weekday morning at 8 AM, we can check the DB and see the system handling ~100,000 events/min. Not bad!

    SELECT NOW(), COUNT(1)/300 AS eps
    FROM statistics.events
    WHERE event_time > NOW() - interval '5 minute';

                  now              | eps
    -------------------------------+------
     2021-05-14 05:06:28.974982+00 | 1648
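The query reports events per second (a row count over a 300-second window), while the prose talks in events per minute. A small sketch of the conversion, with hypothetical function names:

```python
def events_per_second(event_count: int, window_seconds: int = 300) -> int:
    """Mirror COUNT(1)/300: PostgreSQL integer division truncates."""
    return event_count // window_seconds


def events_per_minute(eps: int) -> int:
    """Convert a per-second rate to a per-minute rate."""
    return eps * 60


# The eps value from the query output above.
print(events_per_minute(1648))  # 98880, i.e. roughly 100,000 events/min
```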

— In testing I noticed the most stressed part of the system was disk, not CPU as I had originally suspected. I thought the broker would struggle with thousands of messages a second, but it did OK. This is partially because I did a little premature optimization with ffjson and that improved message parsing performance.

The docker stats from a weekday morning are below. Notice that CPU is fine, and because of the TTL on all keys, memory usage stays fairly constant at ~1GB at all hours of the day.

    CONTAINER ID   NAME                      CPU %    MEM USAGE / LIMIT     MEM %    NET I/O
    6d0a1d7fab0d   redis_hackathon_mqtt_1    24.02%   10.71MiB / 3.786GiB   0.28%    32GB / 60.4GB
    833aab4d39a8   redis_hackathon_redis_1   7.02%    862.7MiB / 3.786GiB   22.26%   58.8GB / 38.9GB

Upgrading from AWS standard gp2 EBS to gp3 EBS volumes allowed me to get 3000 IOPS and 125MB/s throughput essentially for free. Without the upgraded disk, the service still functioned OK, but tile generation was slow and could lag for 10+ minutes at rush hour. Prior to upgrade, disk queues were very long due to the constant write-behind from Redis Gears. With this infrastructure update, even during rush-hour tile regeneration, %iowait stays low. Consider the following results from sar during an hourly tile regeneration event:

                CPU   %user   %system   %iowait   %idle
    20:00:07    all    9.98      9.88     47.08   32.56
    20:00:22    all   10.22     12.04     41.70   35.22   # PostGIS calcs - i/o heavy, but OK
    20:00:37    all   10.46     10.66     61.73   16.95
    20:00:52    all   34.89     11.97     34.48   18.56
    20:01:07    all    8.00      8.51     55.59   26.97
    20:01:22    all   32.93      8.13     26.42   32.42   # Tile gen. - %user heavy, but OK
    20:01:37    all   48.94     10.90     21.29   18.87
    20:01:47    all    7.19      4.39      5.89   81.24   # Back to high %idle, job complete
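For anyone who wants to pull numbers out of a capture like this, here is a rough sketch that parses the rows and finds the sample with the highest %iowait. It assumes the trimmed column layout shown in this post (time, CPU, %user, %system, %iowait, %idle). Raw sar output has more columns, so the field positions here are an assumption, and `parse_sar` is a made-up helper name.

```python
SAR_OUTPUT = """\
20:00:07 all 9.98 9.88 47.08 32.56
20:00:22 all 10.22 12.04 41.70 35.22
20:00:37 all 10.46 10.66 61.73 16.95
20:00:52 all 34.89 11.97 34.48 18.56
20:01:07 all 8.00 8.51 55.59 26.97
20:01:22 all 32.93 8.13 26.42 32.42
20:01:37 all 48.94 10.90 21.29 18.87
20:01:47 all 7.19 4.39 5.89 81.24
"""


def parse_sar(text: str) -> list:
    """Parse trimmed sar rows into dicts; header and malformed lines are skipped."""
    rows = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 6 or fields[1] != "all":
            continue
        time, _cpu, user, system, iowait, idle = fields[:6]
        rows.append({
            "time": time,
            "user": float(user),
            "system": float(system),
            "iowait": float(iowait),
            "idle": float(idle),
        })
    return rows


rows = parse_sar(SAR_OUTPUT)
peak = max(rows, key=lambda r: r["iowait"])
print(peak["time"], peak["iowait"])  # 20:00:37 61.73
```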